It looks like Google is at it again. And, of course, it’s AI.

According to reports, the company’s Google Cloud program is facilitating a harebrained scheme to use AI to catch “mules” and smugglers at the southern border of the United States. While Google’s own services are not being used, it’s right in the middle of things, where all the money is.

Google should know better.

Android & Chill

One of the web’s longest-running tech columns, Android & Chill is your Saturday discussion of Android, Google, and all things tech.

What’s going on here?

I’ll start with a quick explainer for people who are not in the U.S. and might be asking themselves what all this uproar is about.

People arriving illegally in the United States through the southern border is one of the most polarizing issues in the country. Half of the country hates it, and half of those people hate even the legal immigration of people from another culture. The other half knows that it’s a problem, but hates the first half enough to pretend that it isn’t. Yes, it’s stupid, but it is what it is.

With that out of the way, here is what’s happening and how Google gets involved. According to The Intercept, U.S. Customs and Border Protection plans to refurbish some old surveillance towers around Tucson, Arizona.

They want to install equipment that uses AI to identify every person and vehicle that approaches the border. That’s fine, and even my cheap Nest Cam can do it. But, and there’s always a but, they want to use IBM’s Maximo Visual Inspection software, which is usually found on factory machines doing quality-control inspections, to spot people with backpacks or people who seem to be committing some kind of crime or something.

Google (and Amazon, of course) is supposedly facilitating this by providing hosting services for the data streams and tools to train the AI. There is surely a lot of money involved, and Google is thirsty for it.

A few years ago, Google Cloud CEO Thomas Kurian said the company was not going to do anything to help create a “virtual border wall,” but that was then, and this is now. Android Central reached out to Google for comment on its participation in the project and will update this article when we receive a response.

But let’s be clear: Google isn’t providing any of its own AI tools to make all this happen, so even if it is involved, it isn’t directly doing anything bad. It’s only profiting from it.

Google knows better

You may be asking, “Jerry, why is protecting the border something wrong?” It isn’t. It is definitely, 100% not evil in any way or form. A country needs to be able to control how people come in and go out and what they do. I’m not part of the half that hates the other half enough to bury my head in the sand.

But there is a right way and a wrong way. First, you can’t dehumanize people who just want a better life. This is the biggest point of contention among the U.S. population, and I must admit that the current political administration has said and done some pretty dehumanizing things.

Doing anything to help here is a bad public relations move for any company, much less one of Google’s size. The optics of this are terrible, and most people who work for Google and use its products won’t like it. Best of all, tech websites will make sure everyone knows; it’s what we do best.

It probably won’t work, because AI

The other problem is that Google knows it won’t work, but it’s probably still salivating to take part and collect a lot of taxpayer money for hosting services. Of course, it doesn’t want to be responsible for the problems or directly do the evil shit, but it can sit back, watch, and collect its money.

AI can be trained to find people with backpacks. That’s probably simple to do; tedious and slow, but training an AI to do that is possible today. So what?

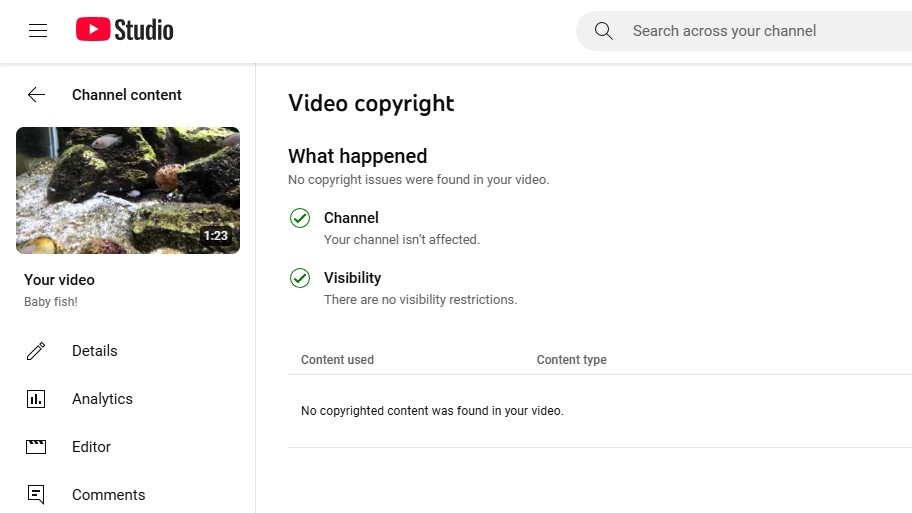

You aren’t naive enough to think that bad things won’t happen to anyone targeted by an AI border super-tower. Google definitely isn’t. Google uses AI to monitor YouTube (among other things), and even that is a bona fide disaster that Google can’t control. If AI can’t learn to detect what is copyrighted and what isn’t, it isn’t good enough to do anything to a human being.

The best thing that could happen is that a truck full of actual human agents intercepts the target. I won’t speculate on the worst case. Either way, the bad guy will be caught. Innocent people will be caught, too.

I wouldn’t go hiking or backpacking around Tucson if I were you.

Assuming that Google really is involved, it isn’t being evil and is simply fulfilling another government hosting contract. It’s just one that is tied to a deeply politically divisive issue, and the company should know better.

It would be the same if Google built an online repository to help register and track every gun in the United States: half of the country would go crazy, and the other half would say it’s great. It’s not great, it’s stupid, and there are plenty of other ways to get that money from the government.